Birthing the Center for Population Health Sciences

Mark Cullen, MD

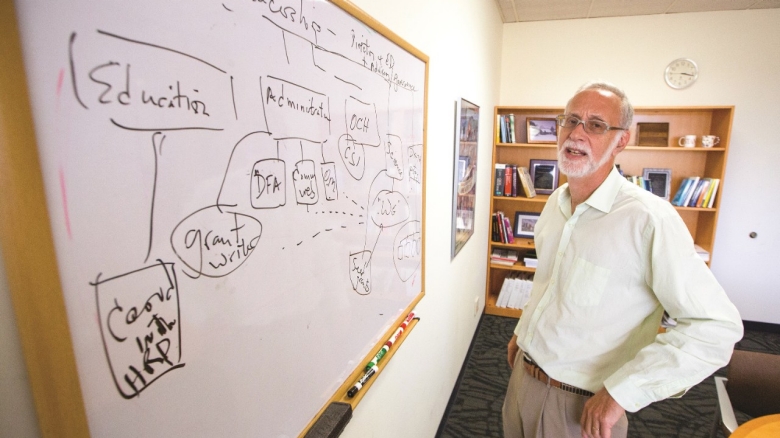

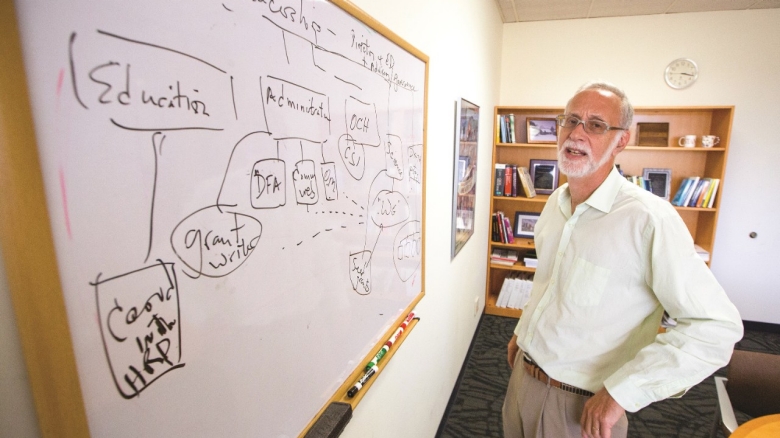

We are told to beware of moving parts, and those of us who value our digits and appendages wisely stay out of the way. In the new Center for Population Health Sciences, there are an infinite number of moving parts; standing there in the middle of them is Mark R. Cullen, MD, its director (professor, General Medical Disciplines), bringing order to this new venture.

All new academic endeavors have some similar needs: space, funds, staff, interest. The center has some of each, and a great deal of the last. “There is incredible interest,” says Cullen enthusiastically. “We are enrolling people who are interested in being affiliate members. We already have 350 from the School of Medicine, and we anticipate another few hundred from across the campus.”

Together with Deputy Director Lorene Nelson, PhD (associate professor, Health Policy and Research), Cullen is creating “a place where health and other forms of data derived from large populations will be made accessible to Stanford faculty and staff supported by our curation services to assist investigators in finding collaborators and analytic support.”

Working Groups

The fundamental unit of these collaborations is the working group, which Cullen describes this way: “What I imagine is that each working group will attract 10 to 12 people who are really interested in a particular project and another 10 or 20 who will be bystanders, watching everything on the intranet we are building to facilitate the work before they get engaged.

“We’ve got 10 working groups that we’re about to spawn,” Cullen continues. “Each targets a problem area or phase of the life-course where there are myriad unanswered questions about the origins of health and disease. Some examples are ‘Sex Differences in Health’ or ‘Retirement, Disability, Cognitive Decline and Aging’ or ‘Immigration and Health.’ It’s hard to know how fast these and the others will gel, but I’ll be disappointed if some don’t begin to gain traction by the end of 2015.

“For every group, I’m trying to group faculty on the main campus with counterparts from the School of Medicine so that there are at least two very distinctive perspectives about what’s important, and different research approaches.”

Raw Materials

Some working groups have great ideas but limited access to data or populations ideal for study. Cullen has an answer: “We’ve already bought a big commercial claims set; we are negotiating with the Centers for Medicare and Medicaid Services to buy the Medicare set; and there are literally dozens of fabulous data sets around campus, including the Federal Research Data Center, that need only new coordination to become a researcher’s dream.”

Some more ambitious projects with groups both local and global are also underway. For example, Cullen points to the INDEPTH dataset, about which “we are actually sending a group to meet in Addis.” INDEPTH has surveillance and demographic data on 52 discrete, large populations (10 to 300,000 people each) in 52 Southeast Asian and African countries. He continues: “A core agreement to facilitate exchange with the Danish Registries and Biobank has been executed and three pilot projects have been launched; we are having ongoing discussions with Santa Clara County to develop a health information exchange that will link electronic medical records on almost all county residents irrespective of which health care they use, and further link these to population-level data at the County Health Department. Recently we received expressions of interest from both Singapore and Taiwan about collaborating with their national health authorities, gaining access to additional data troves.”

Cullen cautions that “some of these projects will take several years to mature, but that’s the whole point. We want them to mature under the watchful guidance of the working groups so that people can mold what might come from them.”

Cullen also has plans to support the working groups in novel ways. For instance, “When our intranet is up and going, we will start a resource exchange where people can post projects, ideas, opportunities for postdocs, requirements for a research assistant, etc. A student seeking a particular type of research experience could post that, hoping a faculty member might say, ‘oh great, a student with nothing to do; just what I need for the new study….’”

As grants are funded and donations received, Cullen hopes to achieve another goal: “Someday I’d like to say to the leaders of the working groups, ‘here is $5,000 or $10,000 to help you grow; here’s a full-time staffer to help you write grants; here are two postdoc stipends; here’s a stipend for a visiting scholar to come work with your group.’”

Space

For most academic centers, space is right up there with money as the biggest concern. So too for Cullen. “A lot of working group faculty have no proximity to each other. What would be truly fantastic would be if we had a building, where people in working groups could use a chunk of space; where, for example, every Friday morning the working group on ‘The First 1000 Days of Life’ could meet. There would be hotelling space, good coffee, and quiet group work areas.”

Staffing

The center will not have a huge staff. Cullen explains: “I imagine we will eventually have 10 or 15 faculty who get some support from the center and a professional staff of another 20 people. We are shortly merging with the Office for Community Health, which already has a staff of 10. It will be the feet-on-the-ground link with the community health centers nearby, plus it will drive some of the education around population health.”

Funding

The center received its initial operating budget from the Stanford Center for Clinical and Translational Research, Stanford Health Care, and the Dean of the School of Medicine, along with a future allocation of space and resources to attract new and promising faculty. The challenge now is to develop a stream of revenue from grants and, through philanthropy, to raise the resources needed to become a sustainable fixture.

“We are trying to write some grants which themselves could generate immediate payback in terms of resources,” says Cullen. “For example, we are responding to a request for applications from the National Institute for Minority Health and Health Disparities to develop a center focused on using tools of precision health to address health disparities. If we’re successful, that would produce substantial resources to jump-start several working groups, including one on ‘Health Disparities’ and another on ‘Gene-Environment Interactions,’ as well as the Office for Community Health.”

It is obvious this is a work in progress, with many moving parts and uncertainties. But the director of this center has dreams and enthusiasm and plans to make it all come true. “It’s exciting precisely because it’s not all pat and set in stone,” he says. “There’s so much opportunity for innovation, for experimentation, and for leadership and members alike to shape and mold those future dreams.”
